Three Ways to Scale Your Existing BI Business Intelligence System

Business Intelligence (BI) systems can take a lot of computing horsepower, and most organizations try to deploy as much horsepower as possible when they implement a BI solution. Unfortunately, BI is one of those things that makes its users want more. More reports. More dashboards. More data. And all that “more” means you’ll eventually need another “more”: computing power. In other words, eventually you’ll need to scale your BI system to handle… well, more.

There are two broad techniques for scaling: Up and out.

Scaling BI “Out”

Scaling out generally means enlisting more servers to support the overall solution. Typically, you do that by looking at the specific services provided by BI, and moving some of those services to different machines. For example, perhaps your BI system serves different distinct audiences within your organization, and each could now benefit from having their own servers dedicated to their BI tasks. Or, perhaps your BI system consists of many different components, which can be separated out onto different servers. Exactly what’s possible, and how you do it, depends a lot on how that BI system was architected in the first place, and on how your users are utilizing it. Make no mistake: Scaling out can be painful. It almost always involves some re-architecting, and with some systems it may not be practical.

Scaling BI “Up”

That’s why scaling up is often the first choice for an organization. Scaling up simply means moving the BI system to a bigger, more powerful server, or upgrading the existing server. When you do so, there are definitely some general guidelines to follow:

- BI systems that rely heavily on in-memory analysis will get the biggest benefit from more memory and more processor power. Generally speaking, the more RAM you can throw at such a solution, the better. When it comes to processors, more is generally preferable to faster. In other words, four processor sockets sporting four cores apiece would usually be preferable to a smaller number of sockets or cores running at a slightly higher clock speed. Unfortunately, servers rarely have room for more processors, and can rarely even accept faster processors. A processor upgrade generally involves a whole new server, which gets expensive. Start, then, by considering a RAM upgrade, since servers are usually a bit more flexible in allowing more memory to be added.

- BI systems that rely heavily upon a data warehouse tend to be impacted the most by disk performance. Getting faster disk drives – that is, disks with a higher rotational speed – can make a difference, but not often a huge one. Instead, try to get more disks. Disk speed usually comes down to how fast magnetic bits can be read off the spinning platters, so more platters – that is, more physical drives in an array – usually result in more bits coming off faster. That said, there’s a limit to how much even a decent array can pump out. You might also look into solid-state disk (SSD) caching systems. The most effective ones I’ve seen take the form of a piece of software running on your database server, with SAS- or PCIe-attached SSDs in the 150GB to 300GB range. I’ve seen that simple (and inexpensive – think around $8,000 per database server) upgrade deliver as much as 3x faster performance under the same workload.
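
The two guidelines above can be roughed out as back-of-the-envelope arithmetic. The Python sketch below is illustrative only: the clock speeds, per-disk throughput, and controller ceiling are made-up numbers, not measurements, and the names `CpuConfig` and `array_throughput_mb_s` are hypothetical. It assumes a workload that spreads evenly across cores and disks, which real BI workloads only approximate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuConfig:
    """A hypothetical server processor layout."""
    sockets: int
    cores_per_socket: int
    ghz: float  # clock speed per core (illustrative value)

    @property
    def total_cores(self) -> int:
        return self.sockets * self.cores_per_socket

    def aggregate_ghz(self) -> float:
        # Crude proxy for total compute: assumes the workload
        # parallelizes perfectly across every core.
        return self.total_cores * self.ghz


def array_throughput_mb_s(disks: int, per_disk_mb_s: float,
                          controller_cap_mb_s: float) -> float:
    """Estimate array read throughput, capped by an assumed controller limit."""
    return min(disks * per_disk_mb_s, controller_cap_mb_s)


# "More" versus "faster": all figures below are assumptions for illustration.
many_slower = CpuConfig(sockets=4, cores_per_socket=4, ghz=2.4)
fewer_faster = CpuConfig(sockets=2, cores_per_socket=4, ghz=3.0)

print("many slower cores :", many_slower.aggregate_ghz())
print("fewer faster cores:", fewer_faster.aggregate_ghz())

# Adding disks helps until the assumed ceiling is reached.
for disks in (4, 8, 16):
    print(disks, "disks:", array_throughput_mb_s(disks, 150.0, 1000.0), "MB/s")
```

Under these assumed numbers, the sixteen slower cores come out ahead of the eight faster ones, and adding disks stops helping once the array hits its ceiling. That ceiling is the point at which something like SSD caching becomes worth a look.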

In BI, Bigger is Usually Better

No matter which kind of BI system you have – data warehouse, in-memory analytics, or a hybrid system that uses both – more computing resources will usually deliver improved performance and the ability to handle a greater workload. More processors. More disks. More memory. They’ll all contribute significant improvements.

If getting more means buying a whole new server, buy big. Make sure you’ve got capacity for a lot of memory, and get as many processor cores installed into your new server as you can possibly afford. An investment here will pay off in the form of a longer-lasting server with the ability to scale up to meet ever-higher demand.

Don Jones

PowerShell and SQL Instructor – Interface Technical Training

Phoenix, AZ

You May Also Like

A Simple Introduction to Cisco CML2

0 3901 0Mark Jacob, Cisco Instructor, presents an introduction to Cisco Modeling Labs 2.0 or CML2.0, an upgrade to Cisco’s VIRL Personal Edition. Mark demonstrates Terminal Emulator access to console, as well as console access from within the CML2.0 product. Hello, I’m Mark Jacob, a Cisco Instructor and Network Instructor at Interface Technical Training. I’ve been using … Continue reading A Simple Introduction to Cisco CML2

Creating Dynamic DNS in Network Environments

0 645 1This content is from our CompTIA Network + Video Certification Training Course. Start training today! In this video, CompTIA Network + instructor Rick Trader teaches how to create Dynamic DNS zones in Network Environments. Video Transcription: Now that we’ve installed DNS, we’ve created our DNS zones, the next step is now, how do we produce those … Continue reading Creating Dynamic DNS in Network Environments

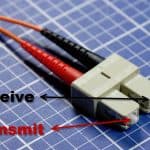

Cable Testers and How to Use them in Network Environments

0 731 1This content is from our CompTIA Network + Video Certification Training Course. Start training today! In this video, CompTIA Network + instructor Rick Trader demonstrates how to use cable testers in network environments. Let’s look at some tools that we can use to test our different cables in our environment. Cable Testers Properly Wired Connectivity … Continue reading Cable Testers and How to Use them in Network Environments